A few months ago, Google shared that AI is now writing 50% of their new code. My first reaction: that’s incredible. My second reaction: wait, what does that actually mean?

I’m genuinely excited about this milestone. I’ve spent the last few years thinking deeply about generative AI, from co-founding Amazon Q Developer (formerly CodeWhisperer) to building the strategy for AI-assisted development at a Fortune 100 company. I believe we’re at an inflection point for software development. But as someone who’s been measuring developer productivity for 25+ years, I know that headline numbers rarely tell the whole story.

So let’s dig into what this 50% actually means, what it tells us about the future of software development, and what questions we should be asking instead.

Two Very Different Realities

Here’s what makes Google’s announcement interesting: 50% AI-generated code could represent two completely different realities.

The optimistic scenario (and I think this is what’s happening at Google):

Developers spend less time writing boilerplate and more time thinking about architecture, design, and solving genuinely hard problems. They ship faster, with higher quality, and find their work more fulfilling. The AI handles the repetitive, well-understood patterns while humans focus on creative problem-solving—the work that requires judgment, context, and domain expertise.

In this world, the 50% represents liberation. Developers are freed from the cognitive overhead of remembering syntax, writing scaffolding code, and implementing patterns they’ve done a hundred times before. They spend their mental energy on the problems that actually matter: How should this system scale? What’s the right abstraction? How do we make this maintainable for the next five years?

The scenario to avoid:

Teams generate code faster than they can thoughtfully review it. Technical debt accumulates at unprecedented speed because it’s so easy to produce code without considering long-term implications. The volume of code increases, but the value delivered doesn’t. Developers spend more time reviewing, debugging, and maintaining AI-generated code than they saved by using AI in the first place.

In this world, the 50% represents a trap. The AI becomes a force multiplier for bad decisions, not good ones. Teams ship faster but break more. Velocity goes up while quality goes down. The codebase becomes harder to understand, harder to change, harder to maintain.

The number alone tells us nothing about which reality we’re in.

The Real Question Nobody’s Asking

The real question isn’t “how much code is AI writing?”

It’s “what are developers doing with the time AI gives back to them?”

This is the question that separates transformation from mere automation.

If AI is writing 50% of Google’s code, that theoretically frees up the developer time that used to go into writing that code. So where is that time going now?

Are developers:

- Thinking more deeply about architecture? Spending the saved time on design reviews, architectural discussions, and making better long-term decisions?

- Understanding user needs better? Using the time to talk to customers, understand pain points, and ensure what they’re building actually matters?

- Mentoring junior developers? Investing in the next generation, sharing context and judgment that AI can’t teach?

- Reducing technical debt? Finally addressing that backlog of refactoring, documentation, and maintenance work that never gets prioritized?

- Innovating on hard problems? Tackling the challenges that have been waiting for someone to have enough time to think deeply about them?

Or are they just reviewing more AI output?

This distinction matters enormously. If developers are spending their “saved” time reviewing AI-generated code, debugging AI mistakes, and fixing AI-introduced bugs, then productivity hasn’t actually improved; the work has just shifted from writing to reviewing.
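
To make that arithmetic concrete, here is a minimal sketch. The `WeeklyHours` categories and every number in it are hypothetical, chosen only to illustrate the point; they are not data from Google or anyone else. It compares two teams that both cut writing time in half: one gives the hours straight back to review and debugging, the other keeps most of them for design and discovery work.

```python
from dataclasses import dataclass


@dataclass
class WeeklyHours:
    """Hypothetical hours per developer per week (illustrative categories)."""
    writing: float
    reviewing: float
    debugging: float
    design_and_discovery: float  # architecture, user research, mentoring


def net_hours_freed(before: WeeklyHours, after: WeeklyHours) -> float:
    """Hours saved on writing, minus extra hours spent reviewing and debugging."""
    saved_writing = before.writing - after.writing
    extra_review = after.reviewing - before.reviewing
    extra_debug = after.debugging - before.debugging
    return saved_writing - extra_review - extra_debug


# Made-up numbers for illustration; each row sums to a 40-hour week.
before = WeeklyHours(writing=16, reviewing=6, debugging=8, design_and_discovery=10)
shifted = WeeklyHours(writing=8, reviewing=11, debugging=11, design_and_discovery=10)
freed = WeeklyHours(writing=8, reviewing=8, debugging=8, design_and_discovery=16)

print(net_hours_freed(before, shifted))  # 0: the saved time went to review and debugging
print(net_hours_freed(before, freed))    # 6: hours genuinely available for other work
```

Both teams could honestly report that AI writes half their code. Only the second one got any time back.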

What We Should Measure Instead

Here’s what I learned from 25 years of building developer tools and measuring their impact: the metrics everyone tracks are rarely the metrics that actually matter.

Lines of code written? Terrible metric. We learned this in the 90s.

Story points completed? Vanity metric that optimizes for activity, not outcomes.

Features shipped? Better, but still doesn’t tell you if you’re building the right things.

The metrics that actually matter:

1. Time to shipped value: How long does it take to go from “we should build this” to “customers are using this”? This measures the entire pipeline—ideation, design, implementation, testing, deployment, adoption. If AI is genuinely helping, this number should drop significantly.

2. Customer impact: Are you solving more customer problems? Are adoption rates higher? Is customer satisfaction improving? The code is just a means to an end. The end is customer value.

3. Developer satisfaction: Are your developers happier? Do they find their work more fulfilling? Are they spending time on the parts of their job they find most valuable? Developer retention and engagement are leading indicators of sustainable productivity.

4. Code quality in production: Are defect rates going down? Is Mean Time To Recovery (MTTR) improving? Is the codebase becoming easier to maintain, or harder? Quality over time is the real test of whether AI is helping or hurting.

5. Architectural coherence: As you scale the codebase, is it becoming more coherent or more fragmented? Can new team members understand it? Can you make sweeping changes without breaking everything? This is where AI’s tendency toward “works but messy” really shows up.

6. Technical debt trajectory: Is technical debt increasing, decreasing, or staying constant? This is the ultimate measure of whether you’re building sustainably or just going fast now and paying later.
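
Two of these are straightforward to compute once you record the right timestamps. The sketch below is one possible way to do it, not a standard: the `Feature` and `Incident` records, their field names, and the sample dates are all assumptions made for illustration. It computes a median time to shipped value (metric 1) and a Mean Time To Recovery (part of metric 4).

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean, median


@dataclass
class Feature:
    """Hypothetical record: when an idea was proposed and first used."""
    proposed_at: datetime         # "we should build this"
    first_customer_use: datetime  # "customers are using this"


@dataclass
class Incident:
    """Hypothetical record of a production incident."""
    started_at: datetime
    recovered_at: datetime


def median_days_to_shipped_value(features: list[Feature]) -> float:
    """Median days from proposal to first customer use."""
    days = [
        (f.first_customer_use - f.proposed_at).total_seconds() / 86400
        for f in features
    ]
    return median(days)


def mean_hours_to_recovery(incidents: list[Incident]) -> float:
    """MTTR in hours: average time from incident start to recovery."""
    hours = [
        (i.recovered_at - i.started_at).total_seconds() / 3600
        for i in incidents
    ]
    return mean(hours)


# Made-up sample data, for illustration only.
features = [
    Feature(datetime(2025, 1, 1), datetime(2025, 1, 31)),  # 30 days
    Feature(datetime(2025, 2, 1), datetime(2025, 2, 21)),  # 20 days
    Feature(datetime(2025, 3, 1), datetime(2025, 3, 11)),  # 10 days
]
incidents = [
    Incident(datetime(2025, 3, 5, 9, 0), datetime(2025, 3, 5, 11, 0)),    # 2 hours
    Incident(datetime(2025, 3, 20, 14, 0), datetime(2025, 3, 20, 18, 0)),  # 4 hours
]

print(median_days_to_shipped_value(features))  # 20.0
print(mean_hours_to_recovery(incidents))       # 3.0
```

The useful signal is the trend, not a single reading: compare these numbers before and after AI adoption, over the same kinds of work.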

The 50% number Google shared? It’s an input metric. I’m far more interested in the output metrics.

What Google’s Announcement Actually Tells Us

Here’s what I think is significant about Google sharing this number publicly: confidence.

They’re confident enough in their process to put this stat out there. That suggests they’re seeing the outcomes that matter—faster shipping, maintained (or improved) quality, happy developers, successful products.

Google isn’t new to measuring developer productivity. They’ve been doing it longer and more rigorously than almost anyone. If they’re comfortable saying “AI writes 50% of our code,” they’ve almost certainly validated that this is producing positive outcomes across their output metrics.

That’s genuinely exciting. It suggests we’re past the experimental phase and into the “this actually works at scale” phase.

But (and this is important) what works at Google might not work everywhere. Google has:

- Exceptional engineering discipline

- Rigorous code review processes

- Sophisticated testing infrastructure

- A culture of measuring everything

- Resources to invest in the tooling and training needed to do this well

Most companies don’t have all of these. Which means most companies need to be thoughtful about how they adopt AI coding tools and what they measure to ensure it’s actually helping.

The Uncomfortable Questions

If you’re leading engineering teams and adopting AI coding tools, here are the questions you should be asking—even if they make you uncomfortable:

Are we measuring the right things? If you’re tracking “AI code acceptance rate” or “percentage of AI-generated code,” you’re measuring the wrong things. Track outcomes, not activity.

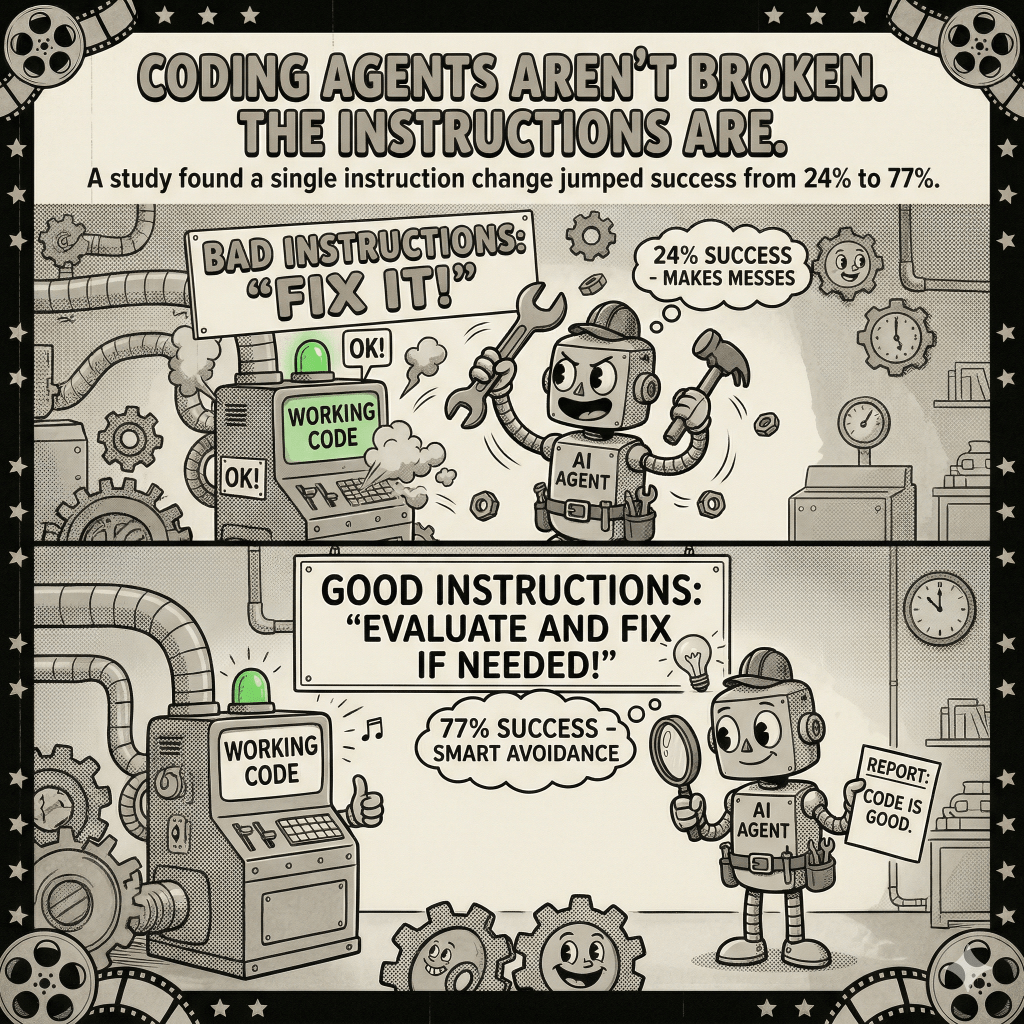

Are we reviewing AI code differently? AI makes different kinds of mistakes than humans do. It’s confident, consistent, and often wrong in subtle ways. Are your code reviews adapted to catch these?

Are we creating more technical debt? It’s easier to generate code than to think about whether it’s the right code. Are you shipping faster but building a maintenance nightmare?

Are our developers actually happier? Are they spending time on more fulfilling work, or are they just reviewing more AI output? Developer satisfaction is a leading indicator of whether AI is helping or hurting.

Do our junior developers still learn? If AI is writing most of the code, how do junior developers develop the skills and judgment they need? Are we inadvertently creating a generation gap?

Are we building the right things? The biggest risk isn’t that AI writes bad code. It’s that AI makes it so easy to write code that we stop asking whether we should be writing that code at all.

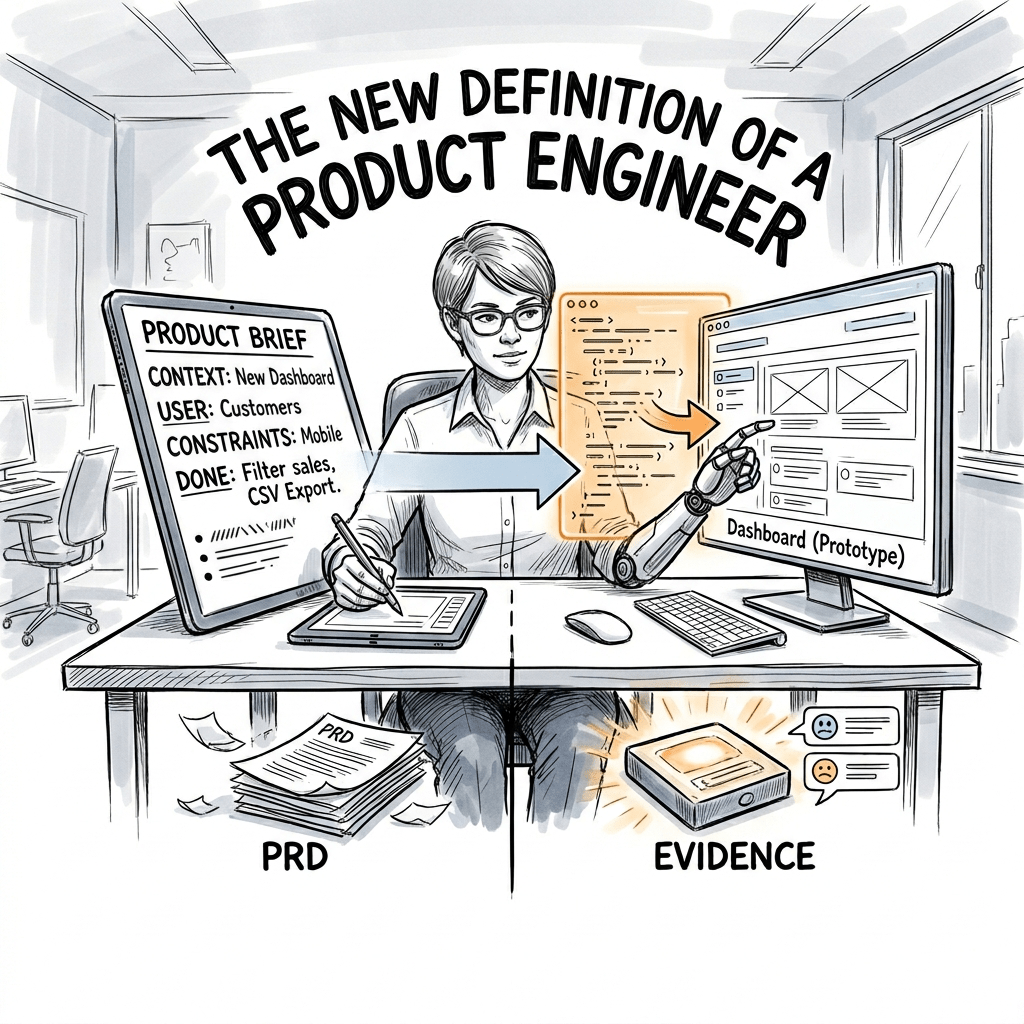

The Bigger Shift: From Writing Code to Directing Code

Here’s what I think is really happening, and why Google’s 50% matters:

We’re in the middle of a fundamental shift in what it means to be a software developer.

For the last 50 years, being a developer meant writing code. You thought about what needed to happen, and then you wrote the code to make it happen. The cognitive work and the typing work were tightly coupled.

AI is decoupling them.

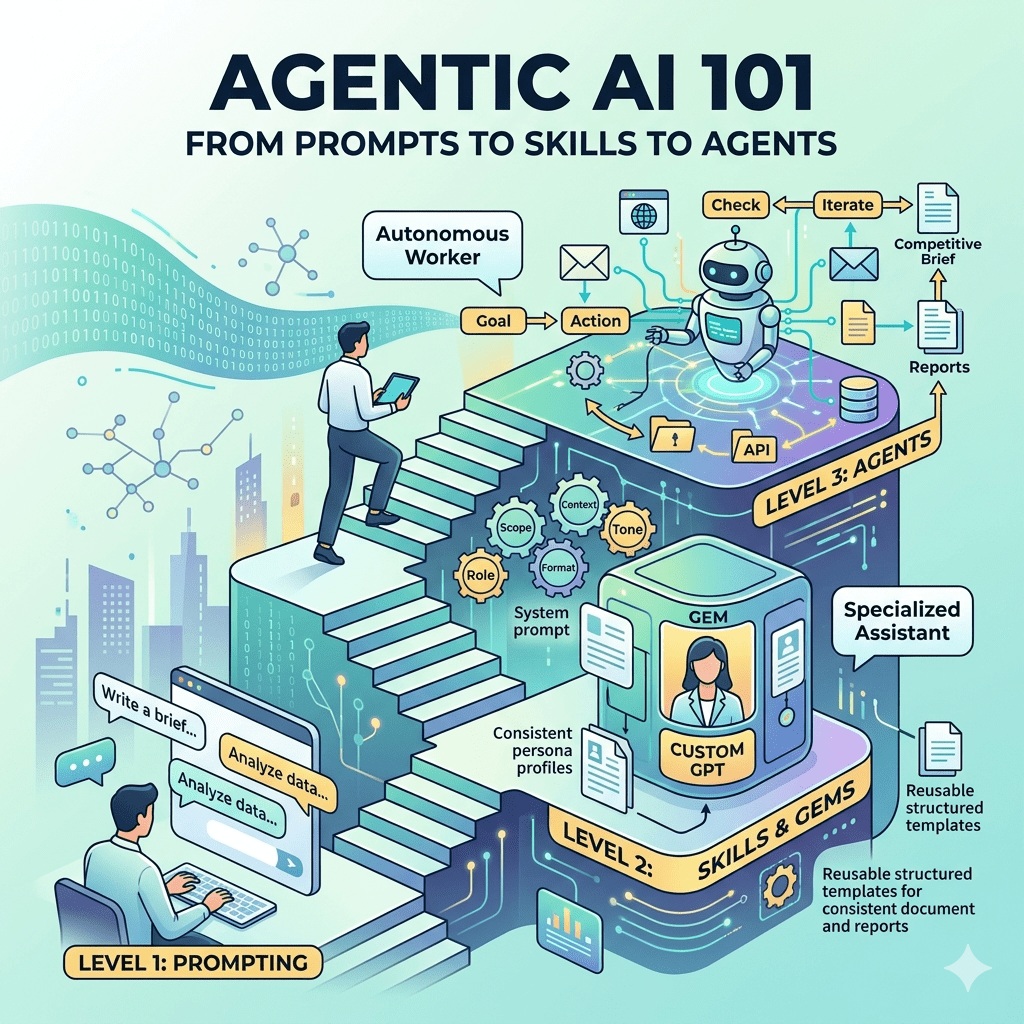

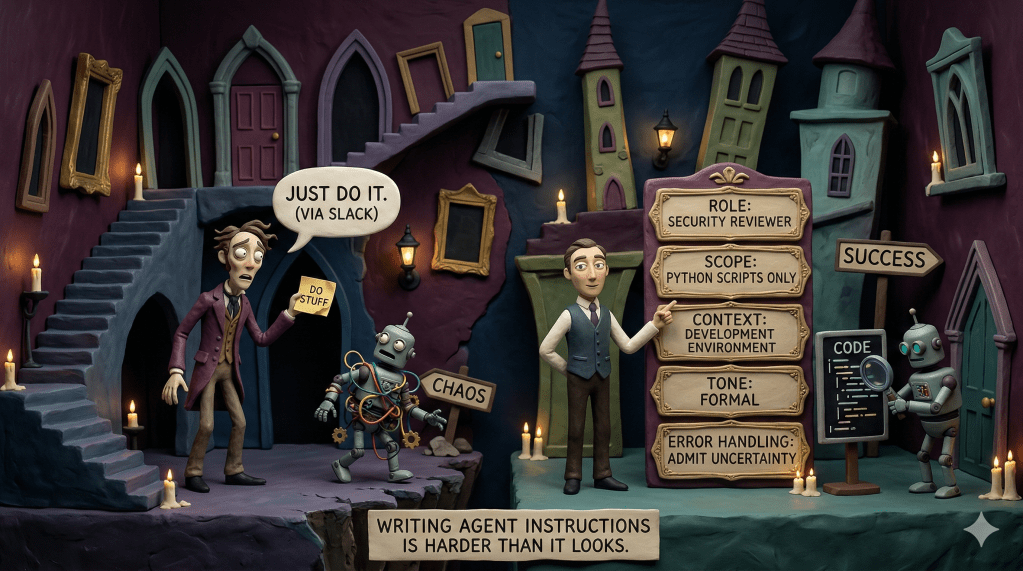

Increasingly, being a developer means directing code creation. You think about what needs to happen, you describe it clearly (in natural language, in specs, in tests), and AI generates the implementation. You review, refine, and ensure it’s right.

This is a profound shift. It means:

- Code-writing skills become less important; specification skills become more important. Can you clearly articulate what you want? Can you write tests that define correct behavior? Can you create specs that an AI (or a junior developer) can implement?

- Code reading becomes more important than code writing. If AI is generating code, your job is to review it critically, understand what it’s doing, and ensure it’s correct. That requires strong code reading and comprehension skills.

- Architectural thinking becomes the differentiator. Anyone can generate working code. Not everyone can design systems that are maintainable, scalable, and coherent. This becomes the high-value skill.

- Judgment becomes the scarce resource. AI can generate options, but it can’t tell you which option is right for your context, your constraints, your users, your long-term strategy. Human judgment is what separates good code from great systems.

Google’s 50% suggests they’re successfully navigating this transition. They’ve figured out how to have AI handle the implementation while humans focus on specification, review, architecture, and judgment.

That’s the goal. That’s what we should all be working toward.

What This Means for Product Leaders

As someone who leads product organizations, here’s what I’m thinking about:

1. We need new competencies: Product managers and engineering leaders need to understand how to work with AI-augmented teams. What does sprint planning look like when implementation time drops by 50%? How do you estimate? How do you prioritize? What skills do you hire for?

2. We need different metrics: If you’re measuring productivity by story points or velocity, AI will break your metrics. You need to measure outcomes such as customer value delivered, quality maintained, and technical debt managed.

3. We need to rethink onboarding: If junior developers aren’t writing as much code, how do they learn? How do they develop judgment? This is a huge concern in the enterprise space. We need to be intentional about creating learning opportunities in an AI-augmented world to ensure we have the next generation of engineers, even if their job looks different.

4. We need to invest in process: AI doesn’t eliminate the need for good engineering practices—it amplifies them. Teams with rigorous code review, comprehensive testing, and clear architectural standards will thrive with AI. Teams without these will struggle.

5. We need to think about technical debt differently: AI makes it easier to accumulate technical debt because it removes the natural brake of “this is tedious to write.” You need explicit processes to prevent this.

The Opportunity Ahead

Here’s why I’m optimistic about Google’s announcement and what it signals:

We’re at the beginning of a fundamental transformation in how software gets built. Not the end. The beginning.

The teams and companies that figure this out—that learn how to effectively collaborate with AI, measure the right things, and focus human effort on high-value judgment rather than low-value implementation—will have an enormous advantage.

This isn’t about AI replacing developers. It’s about AI enabling developers to work at a higher level of abstraction, to spend more time on problems that require human creativity and judgment, and to ship value to customers faster.

But getting there requires intention. It requires measuring outcomes, not activity. It requires investing in process, not just tools. It requires developing new skills and new ways of working.

Google’s 50% is a milestone. It shows that this future is possible, not just in theory but in practice, at scale, in production, at one of the world’s most sophisticated engineering organizations.

Now the question is: how do the rest of us get there?

What I’m Watching

As I continue building with AI tools (I use Claude Code and GitHub Copilot daily on side projects, and I’m leading developer AI adoption strategy at Capital One), here’s what I’m paying attention to:

How does code quality trend over time? Is the codebase becoming easier or harder to maintain? This is the ultimate test.

Where is developer time actually going? Are we creating time for high-value work, or just filling it with different low-value work?

How are junior developers developing? Are they learning the judgment they need, or are they becoming dependent on AI without understanding why things work?

What new failure modes are emerging? AI introduces new kinds of bugs and new kinds of technical debt. What are they, and how do we catch them?

What skills are becoming more valuable? In an AI-augmented world, what separates great developers from mediocre ones?

The 50% is just the beginning. The real story is what happens next.

What are you seeing? If you’re using AI coding tools, I’d love to hear: What outcomes are you tracking? What’s working? What’s not? What are you learning?

Hit reply or comment below. I read and respond to everything.

…D7
