A recent study shows coding agents can’t tell when code is already fixed. The takeaway everyone ran with: AI isn’t ready. There’s a better takeaway hiding in the data.

A research team at ETH Zurich published a study that got a lot of attention last week. The setup was clever: take software repositories where bugs had already been fixed, ask AI coding agents to fix those same bugs, and measure whether the agents could recognize that nothing needed to change. The expected behavior was simple — review the code, determine no fix is needed, submit nothing.

Most models failed more than half the time. Agents confidently submitted unnecessary changes to working code, introduced modifications that weren’t needed, and generally demonstrated that “leave it alone” is a hard outcome to produce. The LinkedIn takes wrote themselves: AI can’t fully automate software engineering. Not yet.

That conclusion isn’t wrong. But the more important finding is sitting right there in the study, and most people blew past it.

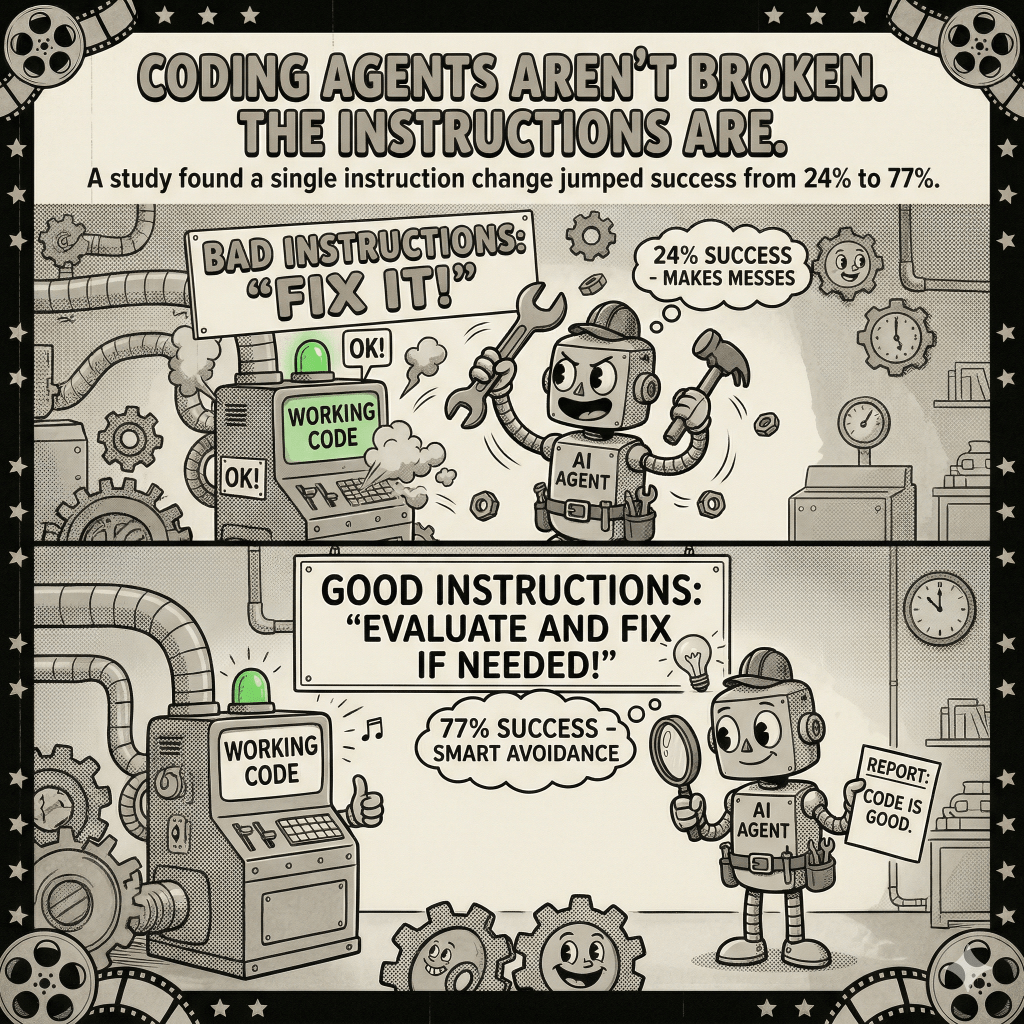

When researchers added an explicit instruction telling agents to abstain if no fix was needed, GPT-5.4 mini’s success rate jumped from 24% to 77%. Same model. Same code. Different instruction.

That’s not a small tweak producing a marginal improvement. That’s the difference between a tool that fails three out of four times and one that succeeds three out of four times — achieved by changing how the task was framed.

The researchers note this finding and, to their credit, recommend that practitioners “explicitly define success paths for unexpected scenarios” as a mitigation. But they ultimately conclude the real fix requires fundamental changes to how coding agents are trained, treating the instruction gap as a symptom of a deeper problem.

I think they have the causality backwards. Or at least, they’re underweighting what the data is actually telling them.

Here’s the dynamic at play. If you walk up to a junior engineer and say “fix this bug,” they’re going to fix the bug — or try to. If the code turns out to be working correctly, many juniors will still make a change, because you told them to fix something and returning empty-handed feels like failing the assignment.

Tell that same engineer: “Review this code and determine whether it needs any changes.” You’ll get a different response — not because their skills changed, but because the task changed. The first framing embeds an assumption: there is something wrong. The second framing opens the door to “actually, this looks fine.”

AI coding agents respond to this dynamic the same way. When you say “fix this issue,” you’ve already told the model there’s a fix to be made. Submitting no changes feels, from the model’s perspective, like not completing the task. The model isn’t being sloppy — it’s being responsive to the instruction it was given.

The jump from 24% to 77% is strong evidence for this. Much of the underlying capability was already there. The framing was the problem.

This isn’t an abstract concern. It’s something I’ve thought about a lot while building AI coding tools. One consistent pattern: the quality and specificity of the instruction matter at least as much as the capability of the model. When a tool produces bad output, the instinct is to blame the model. Often the issue is that the model was asked the wrong question.

This doesn’t mean models have no room to improve. They do. Recognizing when a task premise is flawed — without being explicitly told to check — is a genuinely hard capability, and today’s models don’t do it reliably. The ETH Zurich team is right that better training will help here.

But there’s a gap between “this requires better training to fully solve” and “the only solution is better training.” The study’s own data shows that instruction design closes a significant portion of that gap right now, without waiting for the next generation of models.

There’s also a broader issue with how this study — and most coding agent benchmarks — gets interpreted.

When we ask “can AI fully automate software engineering,” we’re bundling together several different capabilities: understanding a problem, writing correct code, knowing when to ask for clarification, and recognizing when a task is already done. These are related but distinct. The ETH Zurich study specifically measures one of them: can agents identify when action isn’t required?

That’s a real capability, and a meaningful gap to document. But failing at this specific test doesn’t tell us much about whether agents can write correct code, architect solutions, or understand complex requirements. When the headline is “AI can’t automate software engineering,” it treats one capability gap as evidence of fundamental unreadiness — and that leap isn’t supported by the data.

What the study actually shows is more specific: coding agents, given default task framing that implies action is required, will usually take action. That’s genuinely useful to know. It has direct implications for how you design workflows and write instructions. It does not mean AI coding assistance is overhyped or that these tools aren’t delivering real value.

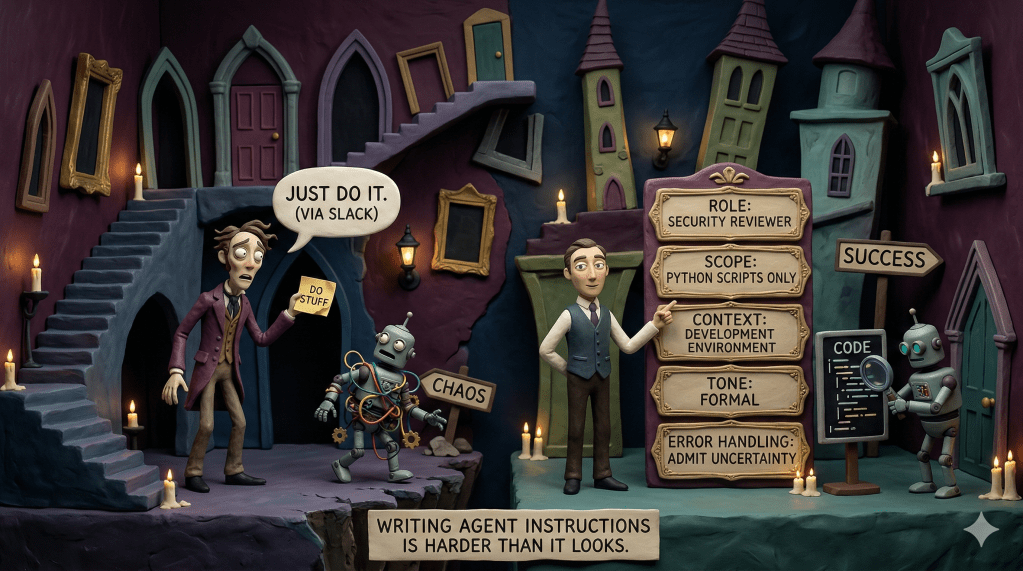

If you’re thinking about where AI coding tools fit in your organization’s workflow, the practical takeaway from this study is concrete.

Don’t frame tasks with embedded assumptions when the right answer might be “nothing needs to change.” “Fix this bug” primes for action. “Evaluate this issue and determine whether any code changes are warranted” primes for assessment. The framing shapes the output, and right now, you can’t assume the model will push back on a premise that turns out to be wrong.
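To make the framing difference concrete, here is a minimal sketch of a prompt wrapper that turns a raw issue report into an assessment-first request. The function name `build_task_prompt`, the `allow_no_change` flag, and the exact wording are all illustrative assumptions, not the prompts used in the study.

```python
def build_task_prompt(issue_text: str, allow_no_change: bool = True) -> str:
    """Wrap a raw issue report in assessment-first framing.

    Hypothetical helper: the wording is illustrative, not taken from the study.
    """
    lines = [
        # Ask for an evaluation instead of presupposing that a fix exists.
        "Evaluate the issue below and determine whether any code changes are warranted.",
        "",
        "Issue report:",
        issue_text.strip(),
    ]
    if allow_no_change:
        # State outright that abstaining counts as success.
        lines += [
            "",
            "If the code already behaves correctly, make no changes and "
            "explain why no fix is needed. That is a valid, successful outcome.",
        ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_task_prompt("Parser crashes on empty input."))
```

The design choice worth noting is that the "no change" path is written into the prompt as a legitimate result, so the agent does not have to infer that abstaining is allowed.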

More importantly: if you’re building AI coding assistance into a development workflow — integrating it into your CI/CD pipeline, your code review process, your developer tooling — the interface between the human and the model matters. A well-designed workflow doesn’t just relay the raw request. It shapes the request in ways that set the agent up for the right behavior. Building in an explicit evaluation step before any action step is one of the highest-leverage things you can do with current models.
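One way to picture an explicit evaluation step is as a gate in front of the action step. The sketch below assumes two hypothetical callables, `evaluate` and `act`, standing in for whatever agent calls your tooling makes. It is an outline of the control flow, not a real agent API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Assessment:
    """Result of the evaluation step (hypothetical structure)."""
    needs_change: bool
    reason: str


def evaluate_then_act(
    issue: str,
    evaluate: Callable[[str], Assessment],
    act: Callable[[str], str],
) -> Optional[str]:
    """Run the evaluation step first; only call the action step if needed.

    Returns the patch produced by `act`, or None when no change is warranted.
    """
    assessment = evaluate(issue)
    if not assessment.needs_change:
        # "Nothing needs to change" is a normal outcome, not a failure.
        return None
    return act(issue)


if __name__ == "__main__":
    # Stand-in callables for illustration; a real workflow would call an agent.
    already_fixed = lambda issue: Assessment(False, "behavior matches the spec")
    make_patch = lambda issue: "patch for: " + issue
    print(evaluate_then_act("Off-by-one in pager", already_fixed, make_patch))
```

Keeping the two steps as separate calls means the action step never runs on a task whose premise the evaluation step has already rejected.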

The ETH Zurich study is good research. It surfaces a real gap and documents it rigorously, and we should close that gap.

But the lesson isn’t “AI coding agents aren’t ready.” The lesson is that a single instruction change took one model from 24% to 77% on a task where default behavior was failing. The capability was there. The framing unlocked it.

That’s not an argument for ignoring the limitations. It’s an argument for taking instructions seriously before concluding the models are the problem.
