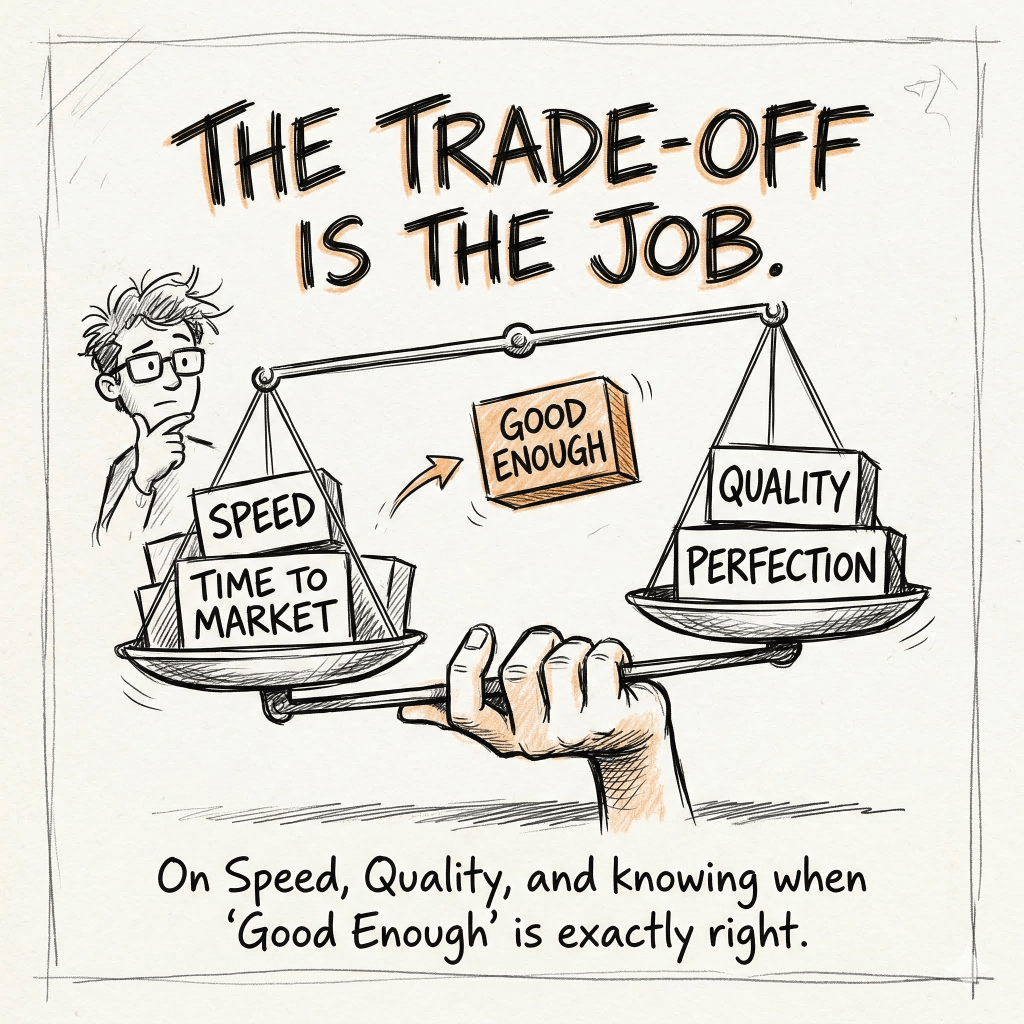

On speed, quality, and knowing when “good enough” is exactly right

Every PM eventually faces a moment where the right answer feels like the wrong answer.

You’ve built something. Your team has poured months into it. It’s good. It’s getting better. And then the market shifts, or a competitor moves, or you look at the calendar and realize the window you thought you had is smaller than you thought.

What do you do with the thing you’ve built?

This is the part of product management that doesn’t fit neatly into frameworks. Trade-offs between speed and quality, between proprietary technology and commercial solutions, between the product you want to ship and the product the market needs right now. These decisions don’t have a formula. They have judgment, experience, and sometimes a walk across a parking lot.

The question PMs don’t answer honestly enough

When I talk to PMs about prioritization, most of them talk about impact and effort. That’s fine. Impact and effort matter. But the calculation that actually determines what you build versus what you defer versus what you kill is harder than a 2×2 matrix.

The real question is: what happens if you don’t ship this now?

Not “how valuable is this feature?” but “what is the cost of not having this feature while your competitors do?” Those are different questions. The first is about potential. The second is about survival.

Most PMs anchor on the first question. The best ones obsess over the second.

Market windows are real. They open, they narrow, and sometimes they close. The cost of shipping something imperfect into an open window is almost always lower than the cost of shipping something perfect into a closed one. But knowing when the window is closing, and being willing to act on that, requires a different kind of confidence than most product roadmap discussions encourage.

Speed to market doesn’t mean shipping garbage. It means being ruthlessly honest about which things matter right now versus which things are nice to have. “Good enough” isn’t a cop-out. It’s a strategy, when you know what you’re trading and why.

What “good enough” actually means

Here’s where a lot of PMs get this wrong. They treat “good enough” as a quality threshold, some floor below which you won’t ship. And there’s truth in that. You’re not shipping broken authentication, you’re not shipping data loss bugs, you’re not shipping things that will actively erode trust.

But “good enough” is really about fit. Does this product, at this quality level, do the job the customer needs done in this moment? The bar isn’t absolute. It’s relative to the alternative.

If your competitor is shipping something that does 80% of what you’re planning to ship, and they’re in market today while you’re still polishing, your customers aren’t waiting for you to finish. They’re evaluating what’s available. They’re forming habits. They’re integrating tools into their workflows.

The longer you stay out of market, the higher the switching cost you’re asking customers to accept when you finally arrive. That’s a real cost. It compounds.

I learned this firsthand building Amazon CodeWhisperer, which became Amazon Q Developer.

The walk to the PRFAQ

In early October 2023, the VP of Data & AI at AWS and I were walking together from the Nitro North building to the Re:Invent building for a PRFAQ review with our SVP. It was one of those conversations that sounds casual until you realize you’re actually making a decision.

I told him I was worried we would miss our window.

At that point, we had built our own LLM for fast, in-line code generation. That model was good. It was specifically trained for the thing it did. But we were also training a larger conversational LLM for chat-based coding, and we were watching GitHub Copilot accelerate in ways that were hard to ignore. GitHub had OpenAI behind them. We were building our conversational model from scratch. The gap wasn’t closing.

There’s a cultural dynamic at AWS that anyone who’s worked there knows well – Not Invented Here is practically a value. If it wasn’t built inside Amazon, it’s suspect. There’s good reason for that instinct. AWS built incredible technology by solving hard problems internally rather than accepting limitations imposed by someone else’s roadmap. I respect that culture. But like most instincts, it can become a liability when conditions change.

The conditions had changed.

Anthropic was building the best conversational LLMs in the industry. We had watched Claude get better at a rate that our internal training timeline couldn’t match. The question wasn’t whether Anthropic’s models were good enough. They were clearly excellent. The question was whether AWS’s cultural preference for proprietary technology was worth the cost of watching GitHub extend its lead.

I walked through all of this with the VP – the GitHub trajectory, our current pace, what it would realistically take to catch up on our own timeline. And then I brought up Amazon’s investment of up to $4 billion in Anthropic.

The Anthropic card

Amazon and Anthropic had announced a strategic collaboration to advance generative AI. The deal centered on AWS as Anthropic’s primary cloud platform, hosting their models through Amazon Bedrock. At the time, that arrangement was designed for external customers. It wasn’t meant for AWS to build its own products on top of Anthropic’s models.

But there was another dimension to the resource question. GPUs for running LLMs were scarce. We were consuming a significant amount for Amazon Q. Amazon Bedrock was consuming the rest to serve customer-facing workloads. Redirecting Bedrock capacity toward an internal product wasn’t a simple ask. It was a real trade-off against revenue-generating workloads.

The argument I made was that the strategic value of accelerating Amazon Q Developer, making it genuinely competitive with GitHub Copilot, was worth the internal resource friction. That if we wanted to be relevant in the AI coding tools market, we needed to move faster than our proprietary model development timeline allowed. And we had a world-class LLM partner in Anthropic that we weren’t using for our own products.

The VP agreed.

That conversation lasted maybe ten minutes. The decision it contained had been building for months.

The harder conversation

Getting the VP’s sign-off was the easy part. Later that same day, I had to tell our Machine Learning Science team that we were pivoting away from the conversational LLM they had been training and refining.

That is a genuinely awful conversation to have. These were people who had been doing serious, technically sophisticated work. They believed in what they were building. And now I was telling them that we were going in a different direction, not because their work was bad, but because the market wasn’t going to wait for it to be ready.

I’ve had a lot of hard conversations over 25+ years in this industry. This type hits differently, because the people on the receiving end haven’t done anything wrong. Their work was good. The problem was timing and competitive pressure, not quality.

What I tried to do, and what I think any PM owes their team in this situation, is tell the whole story. Not just “we’re doing this now,” but why. What we were seeing in the market. What GitHub was doing with OpenAI. What the window looked like. I didn’t sanitize it. I gave them the honest version of the competitive situation and explained what we stood to gain by making the trade.

But I also tried to show them where their work still mattered, and it did. Our fast, in-line code generation model was still ours, still critical, and still needed to be excellent. The new challenge was making that model and Anthropic’s conversational LLMs work well together. One concrete example: we focused the ML Science team on Retrieval Augmented Generation (RAG) with Anthropic’s models, so that inference could draw on information unique to the specific codebase or documentation a customer was working in. That work was the foundation for Q Customizations. Genuinely hard, genuinely important, and a better use of the team’s expertise than continuing to train a conversational LLM we were already losing ground on. The ML Science team wasn’t being sidelined. They were being redirected toward a problem that was, honestly, more interesting given the new architecture.

The team saw it. Not immediately, and not without some frustration, but they saw it. By switching to Anthropic’s LLMs, we were able to ship chat-based coding in time for AWS re:Invent at the end of November 2023, just two months after that (ironic) walk to the Re:Invent building. Amazon Q Developer, powered partly by Anthropic, went on to become the best AI coding tool for AWS workloads. That outcome wouldn’t have happened on the original timeline.

What this actually teaches about trade-offs

The instinct to protect what you’ve built is legitimate. Proprietary technology creates competitive moats. Building internal capabilities compounds over time. There are real, defensible reasons to stay the course on a long-term technical investment.

But those reasons have to be weighed against the cost of staying the course. And the cost is almost never zero.

When I think about how PMs should approach these decisions, a few things stand out from this experience.

First, the trade-off isn’t just about quality. It’s about what you’re trading for. We weren’t trading a great LLM for an okay one. We were trading a not-yet-ready LLM for the best conversational models in the market, so we could compete while the market was still forming. That framing matters. “Good enough” in this case was actually “world-class,” just not ours.

Second, timing changes the calculus. The right decision in October 2023 might have been the wrong decision in October 2022. Market windows, competitive positions, and available options all shift. A trade-off that looks reckless in one context looks obvious in another. Part of the PM’s job is reading the moment, not just the product.

Third, the people carrying the cost of the decision deserve the honest version. I could have softened the conversation with the ML Science team. I could have framed it as a strategic expansion rather than a pivot. But that would have been a disservice to people who were smart enough to see through it anyway. Respecting your team’s intelligence means giving them the real situation, not a managed version of it.

And finally, most trade-off decisions that feel permanent aren’t. We didn’t stop caring about our own models. We redirected focus. The fast code generation model continued to be ours. The architecture question of how proprietary and commercial models work together turned out to be a rich, important problem. Trade-offs create constraints, and constraints often drive better outcomes than unlimited options.

On feature prioritization, specifically

This comes up every time someone asks about trade-offs, so to be specific: feature prioritization is mostly a question of clarity about what you’re trying to achieve and honesty about what you’re not.

The frameworks help: impact versus effort, customer value versus strategic value, now versus later. Use them. But no framework survives contact with a real roadmap without human judgment layered on top.

These are the questions I always ask when a feature list is on the table: What happens if we ship this and nothing else for the next 90 days? What happens if we don’t ship this at all? Which of these features are table stakes for the segment we’re going after, and which are differentiators? Which will someone make a TikTok about or write a tweet about?

That last one matters more than it sounds. Table stakes features don’t generate enthusiasm. They prevent churn. Differentiators generate word of mouth, which generates growth. You need both, but you need to know which is which, because they require different resourcing and different timelines.

Amazon Q Developer needed both table stakes (it had to do basic code completion competently) and differentiators (deep AWS integration, security scanning, the ability to understand AWS-specific patterns). The Anthropic decision accelerated the differentiators by clearing the conversational quality bottleneck. That was the real shape of the trade-off.

Speed versus quality is usually the wrong frame. The real question is always: what are we trading, what are we trading it for, and what does the market look like when we arrive?

Get those answers right and “good enough” becomes a precision instrument, not an excuse.
